VORTIQ-X launches real-time child exploitation detector

Fri, 15th May 2026

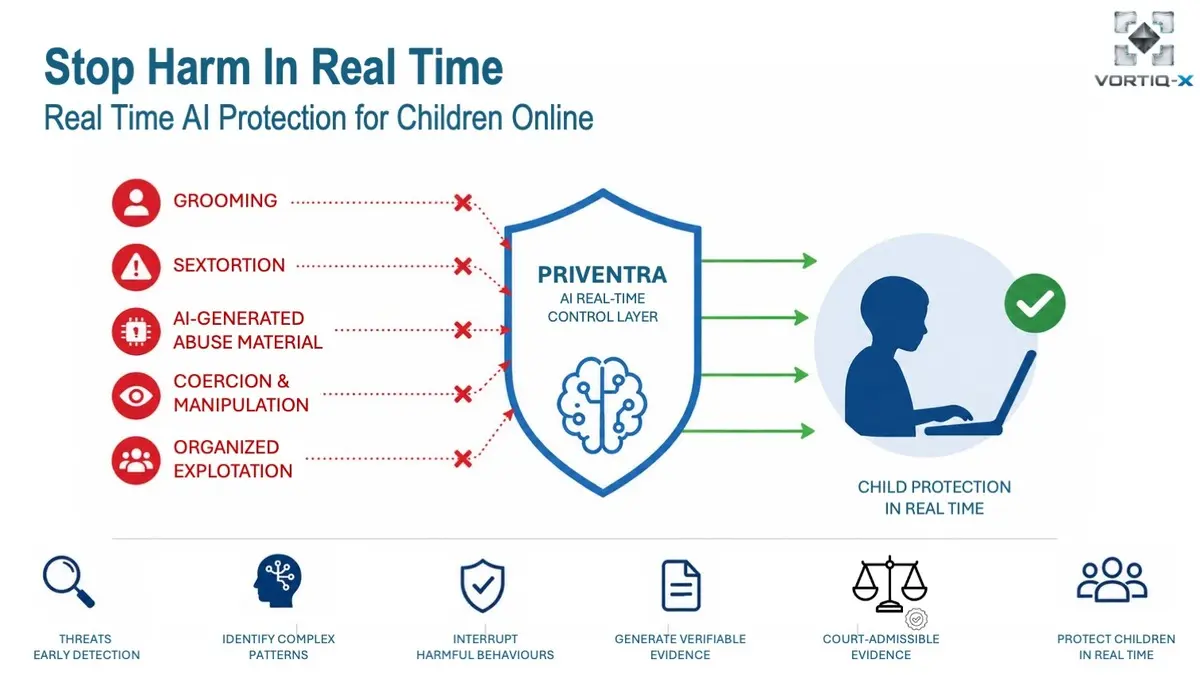

VORTIQ-X Consilium has launched PRIVENTRA, a system intended to detect risks of online child exploitation in real time. The announcement comes as Irish authorities and child protection groups warn of rising levels of grooming, sextortion and abuse involving minors online.

The system is designed to operate within digital platforms and analyse behavioural patterns as interactions unfold. Its aim is to identify early signs of grooming, coercion and sextortion before harm occurs, rather than relying solely on the later discovery of illegal material.

Concern in Ireland about the scale of online threats facing children has been growing. Research from CyberSafeKids found that 65% of Irish children said they had been contacted online by someone they did not know, while Irish reporting figures showed more than 49,000 reports linked to child sexual abuse material during 2025.

International data has also pointed to a worsening picture. The Internet Watch Foundation said 2025 was the worst year on record for online child sexual abuse material, identifying more than 291,000 webpages containing such material, alongside a sharp rise in AI-generated abuse imagery and video.

An Garda Síochána has warned that offenders are increasingly using false identities, encrypted messaging services, gaming platforms and social media to build trust with minors before exploitation escalates. Irish child protection organisations have also highlighted growing risks linked to online coercion, manipulation and sexual extortion.

Real-time focus

According to VORTIQ-X, PRIVENTRA is built around behavioural indicators rather than relying solely on the detection of known illegal content. That approach is intended to help identify suspicious interactions even when the content involved has not previously been flagged or catalogued by moderation systems.

The system is intended to detect patterns linked to early-stage grooming, sextortion attempts, manipulation, coercion and other emerging signs of exploitation. It can also produce records that may be used for investigative purposes.

Peter Källviks, Senior Advisor at VORTIQ-X, said existing responses often come too late to prevent harm.

"What we are seeing is not a lack of regulation - it is a lack of real-time intervention," Källviks said.

"In practice, harmful interactions can escalate in minutes, while systems designed to detect them often respond much later. That gap is where the harm occurs."

Shared patterns

VORTIQ-X argues that several forms of online exploitation follow similar behavioural sequences. In its view, grooming, sextortion and recruitment into criminal activity may appear to be separate issues, but each can involve initial contact, trust-building, manipulation and escalation.

That framing is central to how PRIVENTRA has been presented. Rather than treating each threat type as a separate moderation problem, the company says it looks for common markers in the development of exploitative behaviour.

"These cases are often treated separately, but the underlying dynamics are remarkably similar - contact, trust-building, manipulation and escalation," Källviks said.

"Understanding those behavioural sequences is key to intervening before harm occurs."

Oversight questions

Technology that monitors online interactions in real time is likely to raise questions about privacy, proportionality and platform responsibility, particularly in Ireland, where the Data Protection Commission serves as the lead European Union regulator for several major technology groups.

VORTIQ-X said the system is designed to operate with limited data collection and to activate only when pre-defined behavioural risk thresholds are reached. Decisions on escalation and legal action would remain with the platform operator or the relevant authority.

Jacqueline Saad, Co-founder and Chief Executive Officer of VORTIQ-X, said the company does not view the system as a broad surveillance tool.

"This is not about surveillance," Saad said.

"It is about ensuring that platforms are capable of recognising dangerous behavioural patterns as they emerge, rather than after harm has already occurred. The responsibility for escalation and legal action remains with the platform operator or relevant authority."

Irish backdrop

The Irish market offers a notable test case because of the country's role in digital regulation and the presence of major technology platforms with European operations based there. At the same time, domestic agencies and charities have reported sustained pressure from online abuse, including cases involving AI-generated material.

Hotline.ie, Ireland's national reporting service for illegal online content, has continued to receive reports involving child sexual abuse material, child grooming activity and online sexual extortion. Rising reports linked to AI-generated abuse material have added another layer of complexity for investigators and moderators.

Across Europe, law enforcement bodies and child protection organisations have warned that AI-generated abuse imagery, coercion and sextortion are becoming harder to detect through traditional moderation methods. That has sharpened debate over whether platforms need tools that focus on behavioural patterns as well as the content being exchanged.

VORTIQ-X said it is seeking pilot collaborations in Ireland across sectors including public services, law enforcement support functions, telecommunications, financial services, media, digital platforms, and services aimed at children and young people.

Those discussions are intended to assess whether real-time monitoring of behavioural risk can reduce harm before exploitation escalates.